What is Automated Mask Refinement?

Nexus offers a range of annotation tools for labeling your data, from bounding boxes to free draw to our own AI-assisted annotator, IntelliBrush. Some use cases, however, require finer mask precision than these tools make easy to achieve by hand. To support that level of precision in both annotation and prediction, we now offer a machine-learning-backed automated mask refinement tool that pushes your training data toward pixel-perfect masks.

How Does Automated Mask Refinement Work?

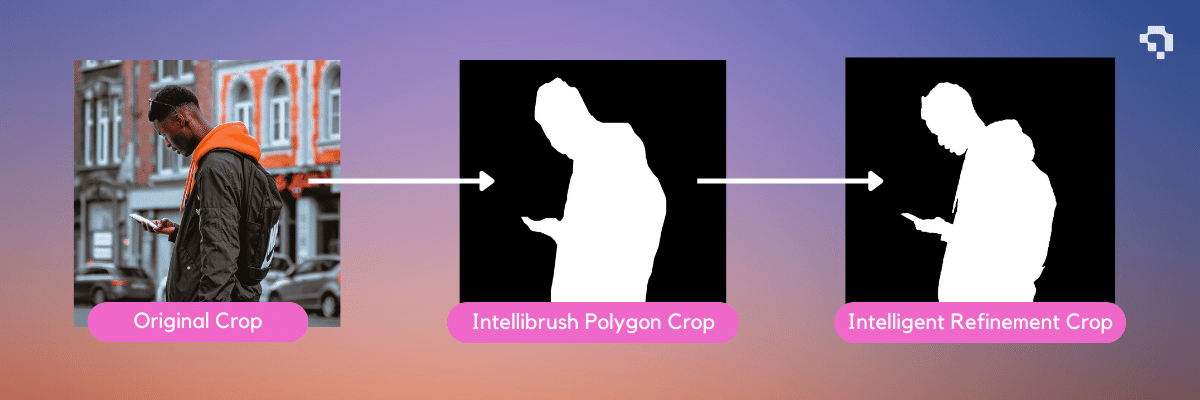

The tool takes two inputs: the initial polygon mask and the image it annotates. It then weighs the importance of features in and around the original mask relative to the scale of the object within the image, and uses a consensus vote to decide which pixels to add or remove.

Several existing mask refinement libraries can help refine pre-existing masks. One popular example is PointRend, a trainable model that can be attached on top of detection models to produce better-quality masks. It takes both coarse and fine-grained features from the detection network, and then classifies randomly selected points in progressively upsampled regions of uncertainty.

In this way, PointRend makes increasingly detailed classifications in uncertain regions and produces a crisp mask boundary. Because PointRend classifies points into specific classes, it must be fine-tuned on the intended dataset to fully leverage its refinement capabilities.
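To make the idea of refining only the uncertain regions concrete, here is a minimal pure-Python sketch of one refinement step. It is not PointRend's actual code and not the model behind our tool. It assumes a binary mask, uses nearest-neighbor upsampling for brevity, and deterministically picks the points whose foreground probability is closest to 0.5 rather than sampling them randomly. The names `upsample_2x`, `most_uncertain_points`, `refine_step`, and `toy_point_head` are all hypothetical, and `toy_point_head` simply stands in for a trained point classifier.

```python
def upsample_2x(prob):
    """Nearest-neighbor 2x upsampling of a 2D grid of foreground probabilities."""
    out = []
    for row in prob:
        wide = [p for p in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))  # separate copy so later edits do not alias rows
    return out


def most_uncertain_points(prob, k):
    """Return the k (row, col) positions whose probability is closest to 0.5."""
    scored = [
        (abs(p - 0.5), r, c)
        for r, row in enumerate(prob)
        for c, p in enumerate(row)
    ]
    scored.sort()
    return [(r, c) for _, r, c in scored[:k]]


def refine_step(prob, k, point_head):
    """Upsample the grid, then re-predict only the k most uncertain points."""
    fine = upsample_2x(prob)
    for r, c in most_uncertain_points(fine, k):
        fine[r][c] = point_head(r, c, fine[r][c])
    return fine


# Hypothetical stand-in for a trained point classifier: it pretends the
# right half of the 4x4 upsampled grid is foreground.
def toy_point_head(r, c, p):
    return 1.0 if c >= 2 else 0.0


coarse = [
    [0.1, 0.4],
    [0.6, 0.9],
]
fine = refine_step(coarse, k=8, point_head=toy_point_head)
```

Only the eight cells that started near 0.5 are re-predicted. The confident cells (0.1 and 0.9) are left untouched, which is what keeps this style of refinement cheap compared with re-predicting every pixel at full resolution.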

What are the Benefits of Automated Mask Refinement?

Automated mask refinement lets users turn imprecise masks into crisp ones that follow local features with just the click of a button. Manual mask annotation is tedious, detail-oriented, and time-consuming. If you have ever gone through the drawn-out experience of fixing mask boundaries one vertex at a time to cover or exclude features on an object, you will see the value in aligning mask boundaries to the object's prominent features at pixel-perfect levels, quickly and with minimal effort.

More accurate masks also give models more accurate input to train on. Even if your current mask annotations cover most or all of the object, the mask boundaries themselves are useful input for making accurate predictions. Imprecise boundaries add variability and make it harder for the model to recognize instances of a class. The more detailed your mask boundaries are, the more information your model receives to differentiate finely between objects, which helps improve model performance and prediction precision.
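One common way to quantify how much a loose boundary costs is intersection over union (IoU) against the true object outline. The sketch below is illustrative only: the `mask_iou` helper and the tiny 4x4 masks are made up for this example and are not part of Nexus.

```python
def mask_iou(mask_a, mask_b):
    """Intersection over union of two equal-sized binary masks (2D lists of 0/1)."""
    intersection = 0
    union = 0
    for row_a, row_b in zip(mask_a, mask_b):
        for a, b in zip(row_a, row_b):
            intersection += 1 if (a and b) else 0
            union += 1 if (a or b) else 0
    # Two empty masks are treated as a perfect match.
    return intersection / union if union else 1.0


# Hypothetical 2x2 object inside a 4x4 image.
truth = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]

# A coarse annotation that fully covers the object but spills past its edges.
coarse = [
    [1, 1, 1, 0],
    [1, 1, 1, 0],
    [1, 1, 1, 0],
    [0, 0, 0, 0],
]

coarse_iou = mask_iou(truth, coarse)   # 4 shared pixels out of 9 in the union
refined_iou = mask_iou(truth, truth)   # a mask that hugs the boundary exactly
```

Here the coarse mask covers every object pixel yet scores only 4/9 (about 0.44), while a mask that follows the boundary exactly scores 1.0. Full coverage alone therefore says little about boundary quality.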

How Does Automated Mask Refinement Work on Datature?

AI Edge Refinement is another addition to our Intelligent Tools. While annotating with IntelliBrush, you will find an option on the left sidebar to further refine the currently displayed mask. Pressing X has the same effect. After a short wait, the refined mask is displayed on screen. If the refinement is a suitable improvement, you can commit the change. If not, you can revert it to return to your original IntelliBrush mask. We suggest applying mask refinement once the IntelliBrush mask captures the general shape and fully covers the object, and you want its boundaries to align with the object more precisely.

There are a few points to note about automatic refinement. Because it is a mask-agnostic tool, it does not use any explicit information from previous tools, such as the positive or negative clicks used in IntelliBrush. Instead, it automatically finds what it believes to be the closest and most relevant features based on the mask and the image alone. As a result, the refinement can sometimes resist covering certain spots if the model judges their features to be too different from the rest of the mask.

Mask refinement also counts as usage of an Intelligent Tool, so each approved use of mask refinement costs one IntelliBrush credit, separate from the IntelliBrush credit used to create the original IntelliBrush annotation.
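As a rough budgeting aid, the credit arithmetic can be sketched as follows. This assumes each IntelliBrush annotation costs one credit and that only committed (approved) refinements are charged, which is our reading of the rule above rather than a billing specification. The function name and the example numbers are hypothetical.

```python
FREE_STARTER_CREDITS = 500  # free IntelliBrush credits on a Starter account


def credits_used(annotations, committed_refinements):
    """Assumes one credit per IntelliBrush annotation plus one per committed refinement."""
    return annotations + committed_refinements


# Hypothetical project: 200 IntelliBrush masks, 150 of them refined and committed.
used = credits_used(annotations=200, committed_refinements=150)
remaining = FREE_STARTER_CREDITS - used
```

Under these assumptions the project uses 350 credits and leaves 150 of the free allowance.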

[Animation: Example of Mask Refinement]

Want to Get Started?

Datature makes it easy to try out the IntelliBrush and Refinement tools. We offer 500 free IntelliBrush credits on a free Starter account for you to play with the Intelligent Tools.

After you’ve created an account and made pixel-perfect annotations with our tools, you can try training your own instance segmentation model to see how annotation quality affects output predictions.

Our Developer’s Roadmap

We plan to keep improving the quality and comprehensiveness of our annotation toolkit. We want to make refinement available on previously created masks, regardless of the method used to create them. We plan to introduce more capabilities for smoothing mask boundaries so that they align more naturally with shapes in the image. We also plan to make our annotation tools work better together, for example by letting you create a new annotation and use other tools such as Refinement in tandem, and to extend what each individual tool can do.

Need Help?

If you have questions, feel free to join our Community Slack to post your questions or contact us about how Mask Refinement fits in with your usage.

For more detailed information about the Mask Refinement functionality, customization options, or answers to any common questions you might have, read more about the annotation process on our Developer Portal.
